Robots and Artificial Intelligence

Artificial intelligence (AI) is arguably the most exciting field in robotics. It's certainly the most controversial: Everybody agrees that a robot can work on an assembly line, but there's no consensus on whether a robot can ever be intelligent.

Like the term "robot" itself, artificial intelligence is hard to define. Ultimate AI would be a recreation of the human thought process — a human-made machine with our intellectual abilities. This would include the ability to learn just about anything, the ability to reason, the ability to use language and the ability to formulate original ideas. Roboticists are nowhere near achieving this level of artificial intelligence, but they have made a lot of progress with more limited AI. Today's AI machines can replicate some specific elements of intellectual ability.

Computers can already solve problems in limited realms. The basic idea of AI problem-solving is simple, though its execution is complicated. First, the AI robot or computer gathers facts about a situation through sensors or human input. The computer compares this information to stored data and decides what the information signifies. The computer runs through various possible actions and predicts which action will be most successful based on the collected information. For the most part, the computer can only solve problems it's programmed to solve — it doesn't have any generalized analytical ability. Chess computers are one example of this sort of machine.
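The "run through possible actions and predict which will be most successful" step can be sketched in a few lines of Python. This is only an illustration, not how any particular chess computer works: it uses a much smaller game than chess (a stone-taking game in which players alternately remove one to three stones and whoever takes the last stone wins), and the names `MAX_TAKE`, `can_win` and `best_move` are made up for this example.

```python
from functools import lru_cache

MAX_TAKE = 3  # assumed rule for this toy game: remove 1 to 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """Predict whether the player about to move can force a win."""
    # Whoever takes the last stone wins, so facing 0 stones means you lost.
    # A position is winning if some legal move leaves the opponent losing.
    return any(
        not can_win(stones - take)
        for take in range(1, min(MAX_TAKE, stones) + 1)
    )


def best_move(stones: int) -> int:
    """Run through every legal action and return one predicted to win."""
    if stones < 1:
        raise ValueError("no stones left to take")
    for take in range(1, min(MAX_TAKE, stones) + 1):
        if not can_win(stones - take):
            return take
    return 1  # no winning action exists; fall back to the smallest move


if __name__ == "__main__":
    print(best_move(7))  # prints 3 (leaves the opponent with 4 stones)
```

Like the chess computer, this program is useless outside its one game: the rules are written directly into `can_win`, so it has no way to reason about anything else.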

Some modern robots can also learn in a limited capacity. A learning robot recognizes whether a certain action (moving its legs in a certain way, for instance) achieves a desired result (navigating an obstacle). The robot stores this information and attempts the successful action the next time it encounters the same situation. Robotic vacuums learn the layout of a room, but they're built for vacuuming and nothing else.
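Here is a minimal Python sketch of that store-and-reuse idea. It is a simplified illustration under stated assumptions, not the software of any real robot: the class `TrialAndErrorLearner`, the `WORLD` table and the situation and action names are all hypothetical, and the "world" is just a lookup table standing in for physical trial and error.

```python
class TrialAndErrorLearner:
    """Remembers which action achieved the goal in each situation."""

    def __init__(self, actions):
        self.actions = list(actions)
        self.known_good = {}  # situation -> action that worked
        self.known_bad = {}   # situation -> set of actions that failed

    def choose(self, situation):
        # Reuse a stored success if there is one.
        if situation in self.known_good:
            return self.known_good[situation]
        # Otherwise pick the first action that has not already failed here.
        failed = self.known_bad.get(situation, set())
        for action in self.actions:
            if action not in failed:
                return action
        return None  # every known action has failed in this situation

    def record(self, situation, action, succeeded):
        if succeeded:
            self.known_good[situation] = action
        else:
            self.known_bad.setdefault(situation, set()).add(action)
            # Forget a stored success that has stopped working.
            if self.known_good.get(situation) == action:
                del self.known_good[situation]


# Hypothetical simulated world: the action that really clears each obstacle.
WORLD = {"low ledge": "high step", "loose gravel": "shuffle"}


def attempt(situation, action):
    """Stand-in for trying the action on real hardware."""
    return WORLD.get(situation) == action


def practice(learner, situation):
    """Try actions until one works; return the number of attempts."""
    attempts = 0
    while True:
        action = learner.choose(situation)
        if action is None:
            return None
        attempts += 1
        succeeded = attempt(situation, action)
        learner.record(situation, action, succeeded)
        if succeeded:
            return attempts


if __name__ == "__main__":
    robot = TrialAndErrorLearner(["shuffle", "hop", "high step"])
    print(practice(robot, "low ledge"))  # prints 3: two failures, then success
    print(practice(robot, "low ledge"))  # prints 1: the stored action is reused
```

The second call succeeds on the first try because the robot looks up what worked before instead of experimenting again. The knowledge is tied to the exact situation, though, so a new obstacle sends it back to trial and error.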

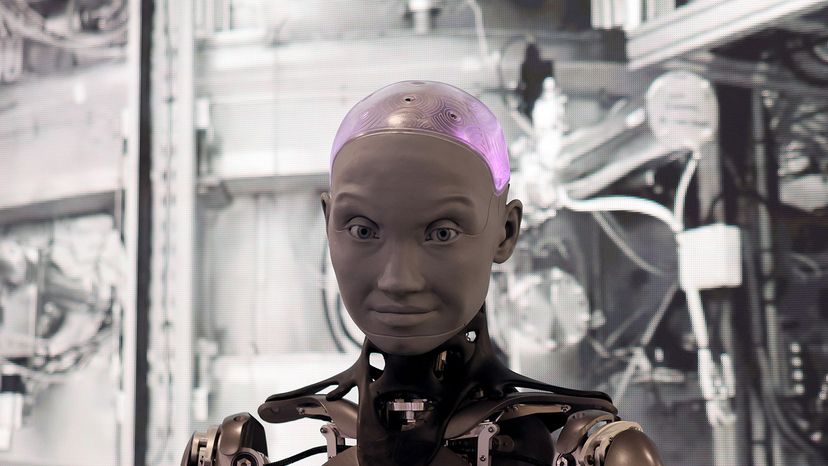

Some robots can interact socially. Kismet, a robot created in 1998 at M.I.T.'s Computer Science & Artificial Intelligence Lab (CSAIL), recognized human body language and voice inflection and responded appropriately. Since then, interactive robots have become available commercially, and some are being used as companions for senior citizens. The robots are helpful for cleaning and mobility assistance, and their interactivity also helps reduce seniors' social isolation.

The real challenge of AI is to understand how natural intelligence works. Developing AI isn't like building an artificial heart: scientists don't have a simple, concrete model to work from. We do know that the brain contains tens of billions of neurons, and that we think and learn by establishing electrical connections between different neurons. But we don't know exactly how all of these connections add up to higher reasoning, or even low-level operations. The complex circuitry seems incomprehensible.

Because of this, AI research is largely theoretical. Scientists hypothesize about how and why we learn and think, and they experiment with their ideas using robots. M.I.T. CSAIL researchers focus on humanoid robots because they feel that being able to experience the world like a human is essential to developing human-like intelligence. A humanoid form also makes it easier for people to interact with the robots, which potentially makes it easier for the robots to learn.

Just as physical robotic design is a handy tool for understanding animal and human anatomy, AI research is useful for understanding how natural intelligence works. For some roboticists, this insight is the ultimate goal of designing robots. Others envision a world where we live side by side with intelligent machines and use a variety of lesser robots for manual labor, health care and communication. Some robotics experts predict that robotic evolution will ultimately turn us into cyborgs — humans integrated with machines. Conceivably, people in the future could load their minds into a sturdy robot and live for thousands of years!

In any case, robots will certainly play a larger role in our lives in the future. In the coming decades, they will gradually move out of the industrial and scientific worlds and into daily life, in the same way that computers spread to the home in the 1980s.

