We can't seem to find the page you're looking for.

But here are some great stories about people and places (or other things) that are lost.

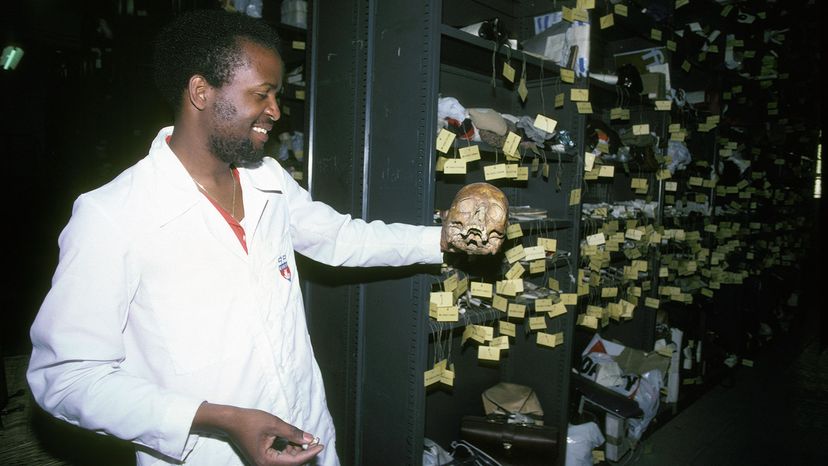

From Human Skulls to Handguns, the Paris Lost and Found Has Seen It All

9 of the Weirdest Lost-and-found Items in the World

10 Things That Went Missing Without a Trace

You can also head back to our homepage, use our site search or browse some of our latest and greatest below.

How to Make a Number Line for the Classroom

Was Gary Francis Poste the Zodiac Killer? We Can't Be Sure

What Was the Largest Wave Ever Recorded?

Personification Examples to Make Your Writing More Interesting

FANBOYS: A Mnemonic for Coordinating Conjunctions

Understanding the Profound Meaning of Angel Number 909

The Mystical Meaning of Angel Number 727