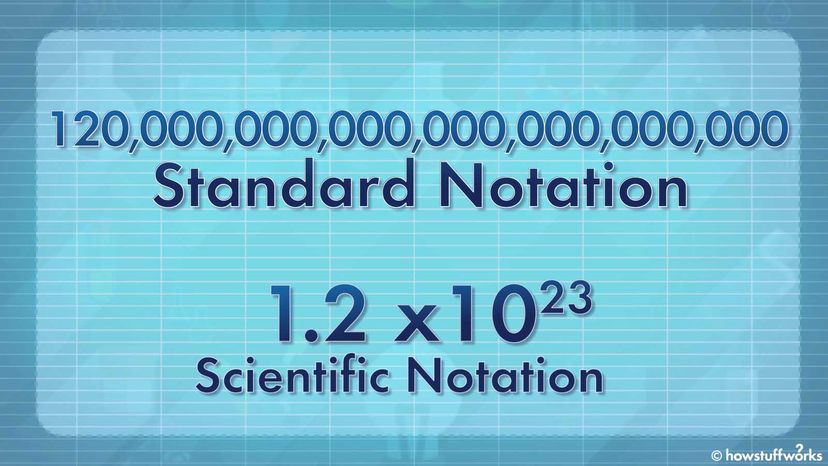

Scientific notation is a concise, standardized way of expressing extremely large or extremely small numbers. It has two essential components: a coefficient (typically a number whose absolute value is at least 1 and less than 10) and an exponent (an integer power of 10). The coefficient gives the number's leading digits, while the exponent fixes how large or small the number is.
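To see the two components on a computer, here is a minimal Python sketch. It leans on Python's built-in "e" format, which prints a number as a coefficient followed by a power-of-10 exponent. The function name split_e_notation and the sample value 0.00042 are illustrative assumptions, not part of any standard.

```python
def split_e_notation(value, digits=1):
    """Return (coefficient, exponent) as read from Python's e-notation.

    Hypothetical helper for illustration; `digits` is the number of
    digits kept after the decimal point in the coefficient.
    """
    # format(2000, ".1e") produces the string '2.0e+03'
    text = format(value, f".{digits}e")
    coefficient, exponent = text.split("e")
    return float(coefficient), int(exponent)


print(split_e_notation(2000))      # (2.0, 3)
print(split_e_notation(0.00042))   # (4.2, -4)
```

Note that the coefficient is rounded to the requested number of digits, so this is a way to read off the two parts, not an exact conversion.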

Think of scientific notation as a recipe card. The recipe card's title and picture (analogous to the coefficient) give you a general idea of the dish, whether it's large or small, but they don't provide the detailed instructions. The cooking time (similar to the exponent) tells you exactly how long to cook the dish, guiding you to the right outcome.

So, the coefficient's absolute value is like glancing at the recipe card's title and picture to understand the dish's magnitude, while the exponent is like the cooking time, directing you precisely for the desired result. Let's take a closer look at coefficients.

Coefficients

In simple terms, a coefficient is a number that multiplies another number or a variable in a mathematical expression. It's a numerical factor that scales whatever it's attached to. In scientific notation, the coefficient is the number in front of the power of 10: in 2.0 x 10³, the coefficient is 2.0.

Exponents

An exponent is a small raised number that tells you how many times to use a larger number (called the base) as a factor. For example, in the expression 2³, the base is 2, and the exponent is 3. It means you multiply three 2s together: 2 x 2 x 2, which equals 8.

Exponents represent repeated multiplication in a more concise way, making it easier to work with very large or very small numbers in mathematics.
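The idea of an exponent as repeated multiplication can be sketched in a few lines of Python. This is only an illustration for whole-number exponents of zero or more; the function name power is an assumption, and in practice Python's built-in ** operator does the same job.

```python
def power(base, exponent):
    """Raise base to a non-negative whole-number exponent by repeated multiplication."""
    result = 1
    # Multiply by the base once for each unit of the exponent.
    for _ in range(exponent):
        result *= base
    return result


print(power(2, 3))    # 2 x 2 x 2 = 8
print(power(10, 3))   # 10 x 10 x 10 = 1000
```

The loop runs exactly as many times as the exponent says, which is why 2³ involves three 2s.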

More Examples of Scientific Notation

As any bank teller should know, 100 is equal to 10 x 10. But instead of writing "10 x 10" out, we could save ourselves some ink and write 10² instead. What's that itty-bitty "2" next to the number 10?

That's what's called an exponent. And the full-sized number (i.e., 10) to its immediate left is known as the base. The exponent tells you how many times you need to multiply the base by itself. So 10² is just another way of writing 10 x 10. Similarly, 10³ means 10 x 10 x 10, which equals 1,000.

(By the way, when solving math problems on a computer or scientific calculator, the caret symbol — or ^ — is sometimes used to denote exponents. Hence, 10² can also be written as 10^2, but we'll save that conversation for another day.)

Scientific notation relies on exponents. Consider the number 2,000. If you wanted to express this number in scientific notation, you'd write 2.0 x 10³. When you use scientific notation, what you're really doing is taking a number (i.e., 2.0) and multiplying it by a specific power of 10 (i.e., 10³).

The exponent (i.e., 3) signifies that you're multiplying the coefficient (i.e., 2.0) by 10 raised to the power of 3, effectively moving the decimal point three places to the right, resulting in the same number we started out with: 2,000.
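The round trip described above, from 2,000 to 2.0 x 10³ and back, can be sketched in Python. This is a rough illustration that uses the standard decimal module to avoid floating-point rounding; the function names to_scientific and from_scientific are hypothetical, and numbers are passed as strings without thousands separators.

```python
from decimal import Decimal


def to_scientific(value):
    """Split a decimal string into (coefficient, exponent), with 1 <= |coefficient| < 10."""
    number = Decimal(value)
    if number == 0:
        return Decimal(0), 0
    # adjusted() gives the power of 10 of the leading digit (3 for 2000).
    exponent = number.adjusted()
    # Shift the decimal point so one nonzero digit sits in front of it.
    coefficient = number.scaleb(-exponent)
    return coefficient, exponent


def from_scientific(coefficient, exponent):
    """Multiply the coefficient by 10 raised to the exponent."""
    return Decimal(coefficient) * Decimal(10) ** exponent


coefficient, exponent = to_scientific("2000")
print(coefficient, exponent)
print(from_scientific("2.0", 3) == Decimal("2000"))   # True
```

A positive exponent moves the decimal point to the right, and a negative exponent moves it to the left, so the same two functions also handle very small numbers.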