Want a robot to cook your dinner, do your homework, clean your house, or get your groceries? Robots already do a lot of the jobs that we humans don't want to do, can't do, or simply can't do as well as our robotic counterparts. In factories around the world, disembodied robot arms assemble cars, delicately place candies into their boxes, and do all sorts of tedious jobs. There are even a handful of robots on the market whose sole job is to vacuum the floor or mow your lawn.


Many of us grew up watching robots on TV and in the movies: There was Rosie, the Jetsons' robot housekeeper; Data, the android crewmember on "Star Trek: The Next Generation"; and of course, C-3PO from "Star Wars." The robots being created today aren't quite in the realm of Data or C-3PO, but there have been some amazing advances in their technology. Honda engineers have been busy creating the ASIMO robot for more than 20 years. In this article, we'll find out what makes ASIMO the most advanced humanoid robot to date.

ASIMO, which stands for Advanced Step in Innovative Mobility, was developed by the Honda Motor Company and is the most advanced humanoid robot in the world. According to the ASIMO Web site, ASIMO is the first humanoid robot in the world that can walk independently and climb stairs.

In addition to walking like we do, ASIMO can understand preprogrammed gestures and spoken commands, recognize voices and faces, and interface with IC Communication cards. ASIMO has arms and hands, so it can do things like turn on light switches, open doors, carry objects, and push carts.

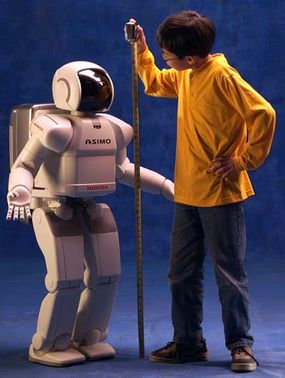

Rather than building a robot that would be another toy, Honda wanted to create a robot that would be a helper for people -- a robot to help around the house, help the elderly, or help someone confined to a wheelchair or bed. ASIMO is 4 feet 3 inches (1.3 meters) tall, which is just the right height to look eye to eye with someone seated in a chair. This allows ASIMO to do the jobs it was created to do without being too big and menacing. ASIMO is often described as looking like a "kid wearing a spacesuit," and its friendly appearance and nonthreatening size work well for the purposes Honda had in mind when creating it.

ASIMO could also do jobs that are too dangerous for humans to do, like going into hazardous areas, disarming bombs, or fighting fires.
