The kings of old knew the weight of their decisions. They knew their every choice sent ripples through the kingdom and that a single ill-timed decree could trigger a series of unstoppable, cascading events. One choice might guarantee a lasting peace, while a dozen others might lead to a toppled throne.

And so these kings turned to augurs and wizards -- people who claimed a special knowledge of future events.

"Peer into tomorrow and advise me on today," a king might command. "Reveal to me the effects of my decisions so that I might safely navigate the days, months and years ahead."

But of course, for all their sorceries and prayers, the king's advisers possessed no true insight into future events. At best, they merely understood the ebb and flow of politics or public opinion. At worst, they were charlatans.

If only there were a way to test a decision on a separate, identical world -- a complex model of reality in which even the most catastrophic choices played out in mere simulation. A leader could fiddle with a new law or economic policy in the safe isolation of a simulated reality before actually introducing it to citizens. Businesses could gauge public interest in a new product. Designers could flawlessly forecast next season's fashion trends.

No longer the domain of pure fantasy, such simulations are now within our grasp, thanks to modern data mining and computer technology. In fact, the international team of scientists with the Future Information and Communication Technologies (FutureICT) Project intends to build one.

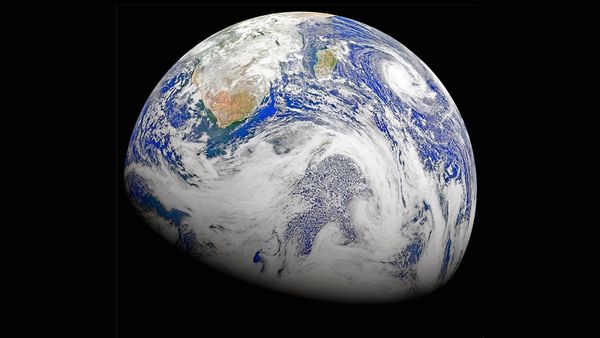

They call it the Living Earth Simulator and, as we'll discuss in this article, FutureICT aims to simulate every aspect of the world around you, from Wall Street and the Paris catwalks to thriving jungle ecosystems and the darkest ocean depths.
